In order to create the ultimate in self-driving cars, we first need to create the ultimate artificial intelligence. But what does that mean?

Last week we took a closer look at autonomous vehicles and how close they are to being production-ready.

While there are different thoughts on when we might reach that point, we believe that it is still some way off simply because of fallback performance. In case you missed that definition, it basically boils down to who or what is to blame in case of an accident.

The result of that is various levels of autonomy, mostly defined by how much driver input is needed. The ultimate goal is Level Five, in which there is no driver and only passengers. And though you may have read about experiments taking place across the globe, none of those cars are running around without a driver behind the wheel.

Strong and weak

It seems even the scientists in charge of creating and defining such things can’t agree on a single definition. Elon Musk and a few other hyper-intelligent people started the non-profit OpenAI in 2015 to ensure artificial general intelligence was created to the benefit of humanity. We’ll get to the “general” part of that sentence in a while, but for now let’s start with the most agreed-upon definition of artificial intelligence (AI).

The most basic definition of AI is the imitation of human intelligence. It seems like an easy thing to mimic, until you factor in that all of human intelligence includes emotion, reasoning, self-correction, self-awareness and the ability to learn from mistakes.

Because of that, AI generally falls into two categories – strong and weak.

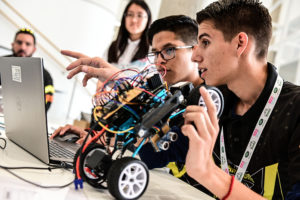

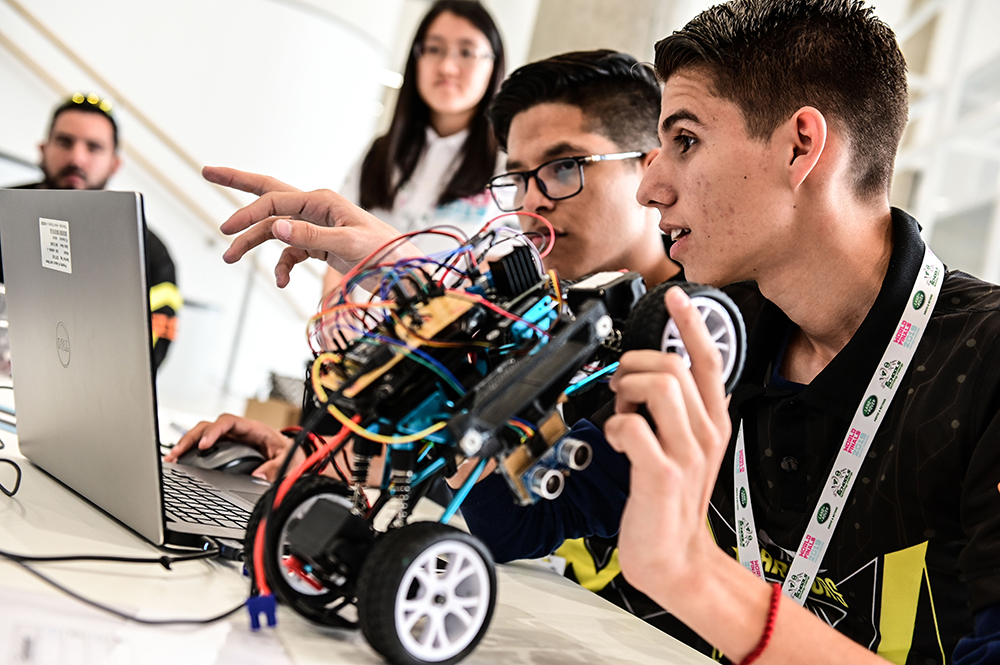

A form of weak AI is most likely within arm’s reach right now. The voice-activated personal assistant that came standard with your phone is a perfect example of weak AI. Though it may seem that it can do a lot, its functional scope is actually rather narrow. Sure, it can navigate you home, make a dinner reservation or send a message on your behalf, but can it make a life-or-death decision?

That’s where strong AI comes in. Strong AI is basically a machine that is able to find a solution to a problem without human intervention, which means it doesn’t actually exist. One of the most impressive AI feats thus far was when a machine solved a Rubik’s Cube with a robotic hand.

Strong AI is nothing more than theoretical at this stage. Also known as True Intelligence or Artificial General Intelligence, genuine strong AI would be required to reason, solve problems, plan, learn, execute, communicate and make judgement calls. It would also need to be objective, sentient (able to perceive or feel) and sapient (wise). Whether it will ever be developed is controversial. Some think it could arrive as early as 2035, while others think it will be some time this century. There are those who think it will never come to fruition and those who actively campaign against it.

Artificial General Intelligence

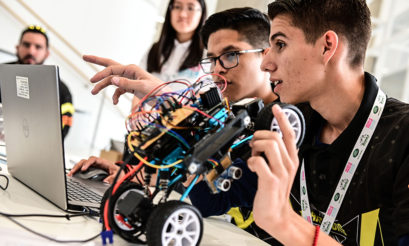

There have been multiple claims of real artificial intelligence over the years. Computers have been taught how to play chess, gamble and even argue on Twitter. In essence, however, these skills are nothing more than a series of ones and noughts programmed by a person. These forms of AI are extremely good at sifting through a lot of data and can come up with solutions, but when you boil it down to basics, each is nothing more than a computer working within a set of parameters that were programmed in advance.

Artificial General Intelligence is the next big step, because it would be equal to human intelligence.

Steve Wozniak (co-founder of Apple) is a big supporter of the coffee test. A machine with intelligence equal to that of a human would be able to go into a strange house and make a cup of coffee. Sounds simple, right? A machine could easily be programmed to perform each of the individual tasks needed to complete the mission, but throw them all together and it’s daunting. It would have to guess where the ingredients are, how much to use, and so on. These are all tasks that would be easy for the average human.

What about cars?

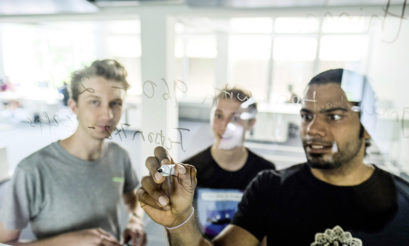

All of this leads us to how AI could be used in cars. The only forms of artificial intelligence currently in use are basic soft systems. The main thing standing in the way is that artificial general intelligence just doesn’t exist, and even if it gets to that point, what will the universal moral code be? The last Moral Machine survey, conducted in 2018, put 13 scenarios in front of people. In every one, at least one person’s life was put at risk, and the results were shocking. Just from a cultural and geographical standpoint, the results varied drastically.

Just imagine you were the driver of a car about to be involved in what might be a fatal accident. Do you swerve to the right where an elderly gentleman is walking his dog, or do you swerve to the left where a young jogger is running? Or do you sacrifice yourself and go straight on? It might be an easy answer for you, no matter what it is, but getting a machine to make that choice is anything but.

And it doesn’t seem like that’s going to change anytime soon.